Ollama Chat

With Ollama, you can run various large language models (LLMs) locally and generate text from them.

Spring AI supports Ollama chat completion through the OllamaChatModel API.

|

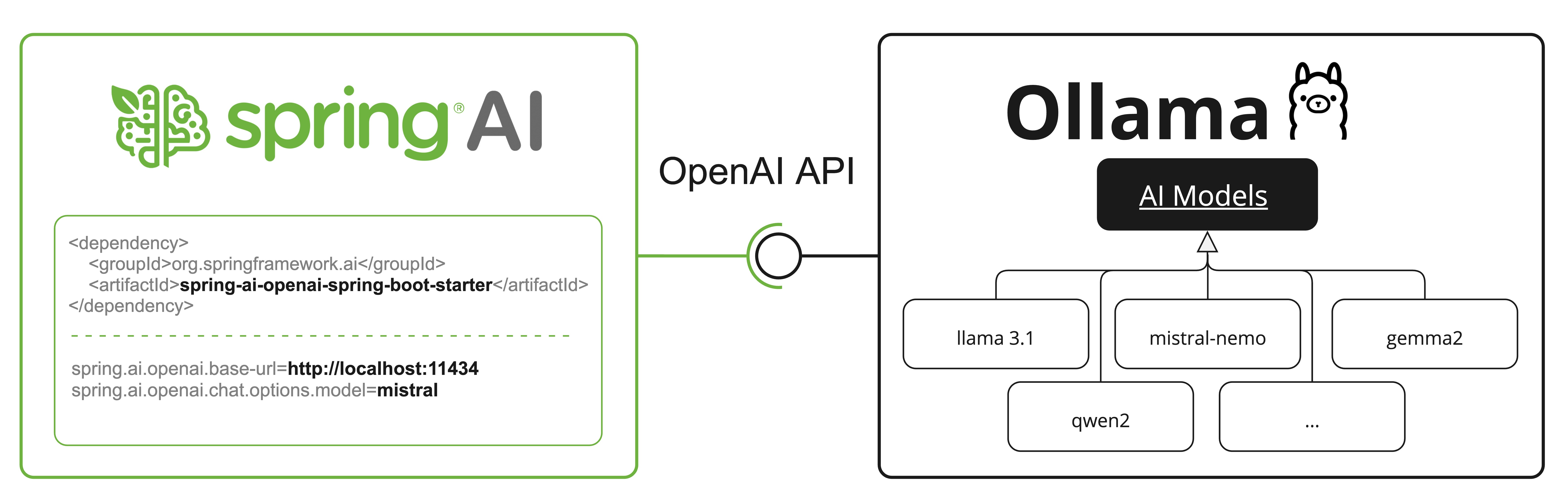

Ollama 还提供一个与 OpenAI API 兼容的端点。 _openai_api_compatibility 部分解释了如何使用 Spring AI OpenAI 连接到 Ollama 服务器。 |

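Prerequisites

You first need access to an Ollama instance. There are a few options, including:

- Download and install Ollama on your local machine.
- Configure and run Ollama via Testcontainers.
- Bind to an Ollama instance via Kubernetes Service Bindings.

You can pull the models you want to use in your application from the Ollama model library:

    ollama pull <model-name>

You can also pull any of the thousands of free GGUF Hugging Face models:

    ollama pull hf.co/<username>/<model-repository>

Alternatively, you can enable the option to download any needed model automatically; see the Auto-pulling Models section.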

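Auto-configuration

There has been a significant change in the artifact names of the Spring AI auto-configuration and starter modules. Please refer to the upgrade notes for more information.

Spring AI provides Spring Boot auto-configuration for the Ollama chat integration.

To enable it, add the following dependency to your project's Maven pom.xml or Gradle build.gradle build file:

Maven

    <dependency>
        <groupId>org.springframework.ai</groupId>
        <artifactId>spring-ai-starter-model-ollama</artifactId>
    </dependency>

Gradle

    dependencies {
        implementation 'org.springframework.ai:spring-ai-starter-model-ollama'
    }

Refer to the Dependency Management section to add the Spring AI BOM to your build file.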

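Base Properties

The prefix spring.ai.ollama is the property prefix for configuring the connection to Ollama.

| Property | Description | Default |
| --- | --- | --- |
| spring.ai.ollama.base-url | Base URL where the Ollama API server is running. | |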

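The following properties are used to initialize the Ollama integration and to auto-pull models (see Auto-pulling Models).

| Property | Description | Default |
| --- | --- | --- |
| spring.ai.ollama.init.pull-model-strategy | Whether and how to pull models at startup time. | |
| spring.ai.ollama.init.timeout | How long to wait for a model to be pulled. | |
| spring.ai.ollama.init.max-retries | Maximum number of retries for the model pull operation. | |
| spring.ai.ollama.init.chat.include | Include this type of model in the initialization task. | |
| spring.ai.ollama.init.chat.additional-models | Additional models to initialize besides the ones configured via default properties. | |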

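Chat Properties

Enabling and disabling the chat auto-configuration is no longer done with spring.ai.ollama.chat.enabled; it is now controlled by the spring.ai.model.chat property listed in the table below.

The prefix spring.ai.ollama.chat.options is the property prefix for configuring the Ollama chat model.

It includes the Ollama request (advanced) parameters, such as model, keep-alive, and format, as well as the Ollama model options properties.

The following are the advanced request parameters for the Ollama chat model: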

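| Property | Description | Default |
| --- | --- | --- |
| spring.ai.ollama.chat.enabled (removed and no longer valid) | Enable the Ollama chat model. | true |
| spring.ai.model.chat | Enable the Ollama chat model. | ollama |
| spring.ai.ollama.chat.options.model | The name of the supported model to use. | mistral |
| spring.ai.ollama.chat.options.format | The format to return a response in. Currently, the only accepted value is … | - |
| spring.ai.ollama.chat.options.keep_alive | Controls how long the model stays loaded in memory following the request. | 5m |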

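The remaining options properties are based on the Ollama Valid Parameters and Values and the Ollama Types. The default values are based on the Ollama Types Defaults.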

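| Property | Description | Default |
| --- | --- | --- |
| spring.ai.ollama.chat.options.numa | Whether to use NUMA. | false |
| spring.ai.ollama.chat.options.num-ctx | Sets the size of the context window used to generate the next token. | 2048 |
| spring.ai.ollama.chat.options.num-batch | Maximum batch size for prompt processing. | 512 |
| spring.ai.ollama.chat.options.num-gpu | The number of layers to send to the GPU(s). On macOS it defaults to 1 to enable Metal support, 0 to disable. A value of -1 indicates that NumGPU should be set dynamically. | -1 |
| spring.ai.ollama.chat.options.main-gpu | When using multiple GPUs, this option controls which GPU is used for small tensors for which the overhead of splitting the computation across all GPUs is not worthwhile. The GPU in question will use slightly more VRAM to store a scratch buffer for temporary results. | 0 |
| spring.ai.ollama.chat.options.low-vram | - | false |
| spring.ai.ollama.chat.options.f16-kv | - | true |
| spring.ai.ollama.chat.options.logits-all | Return logits for all tokens, not just the last one. This must be true for completions to return logprobs. | - |
| spring.ai.ollama.chat.options.vocab-only | Load only the vocabulary, not the weights. | - |
| spring.ai.ollama.chat.options.use-mmap | By default, models are mapped into memory, which allows the system to load only the necessary parts of the model as needed. However, if the model is larger than your total amount of RAM, or if your system is low on available memory, using mmap might increase the risk of pageouts and hurt performance. Disabling mmap results in slower load times but may reduce pageouts if you are not using mlock. Note that if the model is larger than the total amount of RAM, turning off mmap prevents the model from loading at all. | null |
| spring.ai.ollama.chat.options.use-mlock | Lock the model in memory, preventing it from being swapped out when memory-mapped. This can improve performance, but it trades away some of the advantages of memory-mapping by requiring more RAM to run and potentially slowing down load times as the model loads into RAM. | false |
| spring.ai.ollama.chat.options.num-thread | Sets the number of threads to use during computation. By default, Ollama detects this for optimal performance. It is recommended to set this value to the number of physical CPU cores your system has (as opposed to the number of logical cores). 0 = let the runtime decide. | 0 |
| spring.ai.ollama.chat.options.num-keep | - | 4 |
| spring.ai.ollama.chat.options.seed | Sets the random number seed to use for generation. Setting this to a specific number makes the model generate the same text for the same prompt. | -1 |
| spring.ai.ollama.chat.options.num-predict | Maximum number of tokens to predict when generating text. (-1 = infinite generation, -2 = fill context) | -1 |
| spring.ai.ollama.chat.options.top-k | Reduces the probability of generating nonsense. A higher value (e.g., 100) gives more diverse answers, while a lower value (e.g., 10) is more conservative. | 40 |
| spring.ai.ollama.chat.options.top-p | Works together with top-k. A higher value (e.g., 0.95) leads to more diverse text, while a lower value (e.g., 0.5) generates more focused and conservative text. | 0.9 |
| spring.ai.ollama.chat.options.min-p | An alternative to top_p that aims to ensure a balance of quality and variety. The parameter p represents the minimum probability for a token to be considered, relative to the probability of the most likely token. For example, with p=0.05 and the most likely token having a probability of 0.9, logits with a value less than 0.045 are filtered out. | 0.0 |
| spring.ai.ollama.chat.options.tfs-z | Tail-free sampling is used to reduce the impact of less probable tokens on the output. A higher value (e.g., 2.0) reduces the impact more, while a value of 1.0 disables this setting. | 1.0 |
| spring.ai.ollama.chat.options.typical-p | - | 1.0 |
| spring.ai.ollama.chat.options.repeat-last-n | Sets how far back the model looks to prevent repetition. (Default: 64, 0 = disabled, -1 = num_ctx) | 64 |
| spring.ai.ollama.chat.options.temperature | The temperature of the model. Increasing the temperature makes the model answer more creatively. | 0.8 |
| spring.ai.ollama.chat.options.repeat-penalty | Sets how strongly to penalize repetitions. A higher value (e.g., 1.5) penalizes repetitions more strongly, while a lower value (e.g., 0.9) is more lenient. | 1.1 |
| spring.ai.ollama.chat.options.presence-penalty | - | 0.0 |
| spring.ai.ollama.chat.options.frequency-penalty | - | 0.0 |
| spring.ai.ollama.chat.options.mirostat | Enable Mirostat sampling for controlling perplexity. (Default: 0, 0 = disabled, 1 = Mirostat, 2 = Mirostat 2.0) | 0 |
| spring.ai.ollama.chat.options.mirostat-tau | Controls the balance between coherence and diversity of the output. A lower value results in more focused and coherent text. | 5.0 |
| spring.ai.ollama.chat.options.mirostat-eta | Influences how quickly the algorithm responds to feedback from the generated text. A lower learning rate results in slower adjustments, while a higher learning rate makes the algorithm more responsive. | 0.1 |
| spring.ai.ollama.chat.options.penalize-newline | - | true |
| spring.ai.ollama.chat.options.stop | Sets the stop sequences to use. When this pattern is encountered, the LLM stops generating text and returns. Multiple stop patterns may be set by specifying multiple separate stop parameters in a modelfile. | - |
| spring.ai.ollama.chat.options.functions | List of functions, identified by their names, to enable for function calling in a single prompt request. Functions with those names must exist in the functionCallbacks registry. | - |
| spring.ai.ollama.chat.options.proxy-tool-calls | If true, Spring AI does not handle function calls internally but proxies them to the client. The client is then responsible for handling the function calls, dispatching them to the appropriate function, and returning the results. If false (the default), Spring AI handles function calls internally. Applicable only to chat models with function calling support. | false |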
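The min-p entry in the table above gives a numeric example: with p = 0.05 and a most likely token at probability 0.9, the cutoff is 0.05 × 0.9 = 0.045. The following is a minimal sketch of that arithmetic in plain Java. The class name, method name, and token probabilities are made up for illustration, and the code is not Ollama's sampler implementation.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Illustrative min-p filter (hypothetical helper, not Ollama's actual sampler code). */
final class MinPExample {

    /** Keeps only the tokens whose probability is at least minP times the highest probability. */
    static Map<String, Double> filter(Map<String, Double> probs, double minP) {
        double top = probs.values().stream().mapToDouble(Double::doubleValue).max().orElse(0.0);
        double threshold = minP * top; // e.g. 0.05 * 0.9 = 0.045
        Map<String, Double> kept = new LinkedHashMap<>();
        probs.forEach((token, p) -> {
            if (p >= threshold) {
                kept.put(token, p);
            }
        });
        return kept;
    }

    public static void main(String[] args) {
        // Made-up probabilities for three candidate tokens.
        Map<String, Double> probs = new LinkedHashMap<>();
        probs.put("the", 0.9);
        probs.put("a", 0.06);
        probs.put("zebra", 0.04);
        // "zebra" (0.04) falls below the 0.045 cutoff and is dropped.
        System.out.println(filter(probs, 0.05).keySet());
    }
}
```

With the default of 0.0 the cutoff is zero, so no token is removed by this filter.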

All properties prefixed with …
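The temperature entry in the options table says that higher values make the model answer more creatively. One conventional way to picture this is to divide each logit by the temperature before applying softmax. The sketch below assumes that textbook formulation; it is not taken from Ollama's code, and the class name and logit values are invented for illustration.

```java
import java.util.Arrays;

/** Illustrative temperature-scaled softmax (textbook formulation, not Ollama's sampler code). */
final class TemperatureExample {

    /** Converts logits to probabilities after dividing each logit by the temperature (> 0). */
    static double[] softmax(double[] logits, double temperature) {
        if (temperature <= 0) {
            throw new IllegalArgumentException("temperature must be positive");
        }
        double max = Double.NEGATIVE_INFINITY;
        for (double logit : logits) {
            max = Math.max(max, logit / temperature);
        }
        double[] probs = new double[logits.length];
        double sum = 0.0;
        for (int i = 0; i < logits.length; i++) {
            // Subtracting the maximum keeps Math.exp numerically stable.
            probs[i] = Math.exp(logits[i] / temperature - max);
            sum += probs[i];
        }
        for (int i = 0; i < probs.length; i++) {
            probs[i] /= sum;
        }
        return probs;
    }

    public static void main(String[] args) {
        double[] logits = {2.0, 1.0, 0.0}; // made-up scores for three candidate tokens
        System.out.println(Arrays.toString(softmax(logits, 0.4)));
        System.out.println(Arrays.toString(softmax(logits, 0.8)));
        System.out.println(Arrays.toString(softmax(logits, 1.5)));
    }
}
```

In this sketch a lower temperature concentrates probability on the highest-scoring token, while a higher temperature spreads it across the alternatives.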

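Runtime Options

The OllamaOptions.java class provides model configuration, such as the model to use, the temperature, and so on.

On start-up, the default options can be configured with the OllamaChatModel(api, options) constructor or the spring.ai.ollama.chat.options.* properties.

At run-time, you can override the default options by adding new, request-specific options to the Prompt call.

For example, to override the default model and temperature for a specific request:

    ChatResponse response = chatModel.call(
        new Prompt(
            "Generate the names of 5 famous pirates.",
            OllamaOptions.builder()
                .model(OllamaModel.LLAMA3_1)
                .temperature(0.4)
                .build()
        ));

In addition to the model-specific OllamaOptions, you can use a portable ChatOptions instance, created with ChatOptions#builder().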
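The precedence described in this section (start-up defaults, overridden per request) can be pictured with plain maps. This is only a sketch of the idea: the class and method names are hypothetical, the option keys and the "llama3.1" model name are assumptions chosen for illustration, and it does not reproduce how Spring AI merges OllamaOptions internally.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Illustrative only: models option precedence with plain maps, not Spring AI's merge code. */
final class OptionsMergeExample {

    /** Returns the defaults with every request-specific entry layered on top. */
    static Map<String, Object> merge(Map<String, Object> defaults, Map<String, Object> requestOptions) {
        Map<String, Object> effective = new LinkedHashMap<>(defaults);
        effective.putAll(requestOptions); // request-specific values win
        return effective;
    }

    public static void main(String[] args) {
        // Defaults as they might come from spring.ai.ollama.chat.options.* (values are examples).
        Map<String, Object> defaults = Map.of("model", "mistral", "temperature", 0.8, "top-k", 40);
        // Options attached to a single Prompt.
        Map<String, Object> perRequest = Map.of("model", "llama3.1", "temperature", 0.4);
        // model and temperature come from the request; top-k keeps its default.
        System.out.println(merge(defaults, perRequest));
    }
}
```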

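Auto-pulling Models

Spring AI Ollama can automatically pull models when they are not available in your Ollama instance. This feature is particularly useful for development and testing, as well as for deploying your applications to new environments.

You can also pull, by name, any of the thousands of free GGUF Hugging Face models.

There are three strategies for pulling models:

- always (defined in PullModelStrategy.ALWAYS): Always pull the model, even if it is already available. Useful to ensure you are using the latest version of the model.
- when_missing (defined in PullModelStrategy.WHEN_MISSING): Only pull the model if it is not already available. This may result in using an older version of the model.
- never (defined in PullModelStrategy.NEVER): Never pull the model automatically.

Because of potential delays while downloading models, automatic pulling is not recommended for production environments. Instead, consider assessing and pre-downloading the necessary models in advance.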
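The three strategies listed above boil down to a small decision: given a strategy and whether the model is already present, should a pull be attempted? The sketch below captures that decision with a stand-in enum. The Strategy enum and shouldPull method are hypothetical and written only for illustration; the real type is PullModelStrategy, and Spring AI's own initialization logic is not shown here.

```java
/** Hypothetical mirror of the three pull strategies; the real enum is PullModelStrategy. */
final class PullDecisionExample {

    enum Strategy { ALWAYS, WHEN_MISSING, NEVER }

    /** Decides whether a pull should be attempted, given whether the model is already available. */
    static boolean shouldPull(Strategy strategy, boolean modelAvailable) {
        switch (strategy) {
            case ALWAYS:
                return true;            // pull even if the model is already present
            case WHEN_MISSING:
                return !modelAvailable; // pull only if the model is absent
            default:
                return false;           // NEVER: leave model management to the operator
        }
    }

    public static void main(String[] args) {
        for (Strategy strategy : Strategy.values()) {
            System.out.println(strategy
                    + " available=" + shouldPull(strategy, true)
                    + " missing=" + shouldPull(strategy, false));
        }
    }
}
```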

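All models defined via configuration properties and default options can be pulled automatically at startup time. You can configure the pull strategy, timeout, and maximum number of retries using configuration properties:

    spring:
      ai:
        ollama:
          init:
            pull-model-strategy: always
            timeout: 60s
            max-retries: 1

The application will not complete its initialization until all specified models are available in Ollama. Depending on the model size and the speed of the internet connection, this may significantly slow down your application's startup time.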

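You can initialize additional models at startup time, which is useful for models that are used dynamically at runtime:

    spring:
      ai:
        ollama:
          init:
            pull-model-strategy: always
            chat:
              additional-models:
                - llama3.2
                - qwen2.5

If you want to apply the pull strategy only to specific types of models, you can exclude chat models from the initialization task: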

    spring:
      ai:
        ollama:
          init:
            pull-model-strategy: always
            chat:
              include: false

This configuration applies the pull strategy to all models except chat models.

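Function Calling

You can register custom Java functions with the OllamaChatModel and have the Ollama model intelligently choose to output a JSON object containing the arguments to call one or more of the registered functions.

This is a powerful technique for connecting LLM capabilities with external tools and APIs.

Read more about Tool Calling.

You need Ollama 0.2.8 or newer to use the function calling capabilities, and Ollama 0.4.6 or newer to use them in streaming mode.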
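The two version thresholds above can be checked with a simple numeric comparison of dotted version strings. The helper below is a hypothetical sketch, not part of Spring AI or Ollama; it assumes the version string contains only dot-separated integers (no suffixes such as "-rc1").

```java
/** Hypothetical helper that checks an Ollama version string against the documented minimums. */
final class OllamaVersionCheck {

    /** Compares two dotted numeric versions; returns a negative, zero, or positive number. */
    static int compare(String a, String b) {
        String[] x = a.split("\\.");
        String[] y = b.split("\\.");
        for (int i = 0; i < Math.max(x.length, y.length); i++) {
            int xi = i < x.length ? Integer.parseInt(x[i]) : 0; // missing parts count as 0
            int yi = i < y.length ? Integer.parseInt(y[i]) : 0;
            if (xi != yi) {
                return Integer.compare(xi, yi);
            }
        }
        return 0;
    }

    /** Function calling needs Ollama 0.2.8 or newer. */
    static boolean supportsFunctionCalling(String version) {
        return compare(version, "0.2.8") >= 0;
    }

    /** Function calling in streaming mode needs Ollama 0.4.6 or newer. */
    static boolean supportsStreamingFunctionCalling(String version) {
        return compare(version, "0.4.6") >= 0;
    }

    public static void main(String[] args) {
        String version = "0.3.12"; // example value
        System.out.println(supportsFunctionCalling(version));
        System.out.println(supportsStreamingFunctionCalling(version));
    }
}
```

Comparing the parts as integers rather than as text matters here: a textual comparison would wrongly rank 0.10.0 below 0.2.8.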

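Multimodal

Multimodality refers to a model's ability to simultaneously understand and process information from various sources, including text, images, audio, and other data formats.

Some of the models available in Ollama with multimodality support are LLaVA and BakLLaVA (see the full list). For further details, refer to LLaVA: Large Language and Vision Assistant.

The Ollama Message API provides an "images" parameter to include a list of base64-encoded images with the message.

Spring AI's Message interface facilitates multimodal AI models by introducing the Media type.

This type holds the data and details about media attachments in messages, using Spring's org.springframework.util.MimeType and an org.springframework.core.io.Resource for the raw media data.

Below is a simple code example, excerpted from OllamaChatModelMultimodalIT.java, illustrating the combination of user text with an image.

    var imageResource = new ClassPathResource("/multimodal.test.png");

    var userMessage = new UserMessage("Explain what do you see on this picture?",
            new Media(MimeTypeUtils.IMAGE_PNG, imageResource));

    ChatResponse response = chatModel.call(new Prompt(userMessage,
            OllamaOptions.builder().model(OllamaModel.LLAVA).build()));

The example shows a model taking the multimodal.test.png image as input, along with the text message "Explain what do you see on this picture?", and generating a response like this: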

The image shows a small metal basket filled with ripe bananas and red apples. The basket is placed on a surface, which appears to be a table or countertop, as there's a hint of what seems like a kitchen cabinet or drawer in the background. There's also a gold-colored ring visible behind the basket, which could indicate that this photo was taken in an area with metallic decorations or fixtures. The overall setting suggests a home environment where fruits are being displayed, possibly for convenience or aesthetic purposes.
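At the HTTP level, the "images" parameter mentioned in this section carries base64 strings alongside the message text. The sketch below shows, with JDK classes only, how raw image bytes could be encoded and placed into a message object. The class and method names are hypothetical, the JSON is assembled by hand under the assumption that the text needs no escaping, and the placeholder bytes stand in for a real image file; Spring AI does this work for you when you use Media.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

/** Hypothetical sketch of a message that carries a base64-encoded image in "images". */
final class OllamaImageMessageExample {

    /** Base64-encodes raw image bytes (standard alphabet, with padding). */
    static String encodeImage(byte[] imageBytes) {
        return Base64.getEncoder().encodeToString(imageBytes);
    }

    /** Builds a user message as JSON; assumes the text contains no characters that need escaping. */
    static String userMessageJson(String text, byte[] imageBytes) {
        return "{\"role\":\"user\",\"content\":\"" + text + "\",\"images\":[\""
                + encodeImage(imageBytes) + "\"]}";
    }

    public static void main(String[] args) {
        // Placeholder bytes; a real application would read the image file instead.
        byte[] fakeImage = "hi".getBytes(StandardCharsets.UTF_8);
        System.out.println(userMessageJson("Describe this picture", fakeImage));
    }
}
```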

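Structured Outputs

Ollama provides custom Structured Outputs APIs that ensure your model generates responses conforming strictly to the JSON Schema you provide.

These APIs offer enhanced control and precision in addition to the existing Spring AI model-agnostic Structured Output Converter.

Configuration

Spring AI allows you to configure the response format programmatically using the OllamaOptions builder.

Using the Chat Options Builder

You can set the response format programmatically with the OllamaOptions builder as shown below: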

    String jsonSchema = """
            {
                "type": "object",
                "properties": {
                    "steps": {
                        "type": "array",
                        "items": {
                            "type": "object",
                            "properties": {
                                "explanation": { "type": "string" },
                                "output": { "type": "string" }
                            },
                            "required": ["explanation", "output"],
                            "additionalProperties": false
                        }
                    },
                    "final_answer": { "type": "string" }
                },
                "required": ["steps", "final_answer"],
                "additionalProperties": false
            }
            """;

    Prompt prompt = new Prompt("how can I solve 8x + 7 = -23",
            OllamaOptions.builder()
                .model(OllamaModel.LLAMA3_2.getName())
                .format(new ObjectMapper().readValue(jsonSchema, Map.class))
                .build());

    // prompt is a local variable here; ollamaChatModel is an injected field
    ChatResponse response = this.ollamaChatModel.call(prompt);

Integrating with BeanOutputConverter Utilities

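You can use the existing BeanOutputConverter utilities to generate the JSON Schema automatically from your domain objects and later convert the structured response into domain-specific instances: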

    record MathReasoning(
        @JsonProperty(required = true, value = "steps") Steps steps,
        @JsonProperty(required = true, value = "final_answer") String finalAnswer) {

        record Steps(
            @JsonProperty(required = true, value = "items") Items[] items) {

            record Items(
                @JsonProperty(required = true, value = "explanation") String explanation,
                @JsonProperty(required = true, value = "output") String output) {
            }
        }
    }

    var outputConverter = new BeanOutputConverter<>(MathReasoning.class);

    Prompt prompt = new Prompt("how can I solve 8x + 7 = -23",
            OllamaOptions.builder()
                .model(OllamaModel.LLAMA3_2.getName())
                .format(outputConverter.getJsonSchemaMap())
                .build());

    // prompt, response, and content are local variables; ollamaChatModel is an injected field
    ChatResponse response = this.ollamaChatModel.call(prompt);
    String content = response.getResult().getOutput().getText();

    MathReasoning mathReasoning = outputConverter.convert(content);

Make sure to use …

OpenAI API Compatibility

Ollama is OpenAI API-compatible, and you can use the Spring AI OpenAI client to talk to Ollama and use tools.

To do so, configure the OpenAI base URL to point to your Ollama instance: spring.ai.openai.chat.base-url=http://localhost:11434, and select one of the provided Ollama models: spring.ai.openai.chat.options.model=mistral.

Check the OllamaWithOpenAiChatModelIT.java tests for examples of using Ollama through Spring AI OpenAI.

HuggingFace Models

Ollama can access, out of the box, all GGUF Hugging Face chat models.

You can pull any of these models by name with ollama pull hf.co/<username>/<model-repository>, or configure the auto-pulling strategy (see Auto-pulling Models):

    spring.ai.ollama.chat.options.model=hf.co/bartowski/gemma-2-2b-it-GGUF
    spring.ai.ollama.init.pull-model-strategy=always

- spring.ai.ollama.chat.options.model: Specifies the Hugging Face GGUF model to use.
- spring.ai.ollama.init.pull-model-strategy=always: (optional) Enables automatic model pulling at startup time. For production, you should pre-download the models to avoid delays: ollama pull hf.co/bartowski/gemma-2-2b-it-GGUF.
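The model name used above follows the pattern hf.co/<username>/<model-repository>. If an application assembles such names from separate parts, a small helper like the following could be used. It is a hypothetical sketch (class and method names are invented) that only builds the string; it does not check that the repository exists or that it contains GGUF files.

```java
/** Hypothetical helper that assembles a Hugging Face model name in the hf.co/<user>/<repo> form. */
final class HuggingFaceModelName {

    /** Builds the model name passed to "ollama pull" or to spring.ai.ollama.chat.options.model. */
    static String of(String username, String repository) {
        if (username.isBlank() || repository.isBlank()) {
            throw new IllegalArgumentException("username and repository must not be blank");
        }
        return "hf.co/" + username + "/" + repository;
    }

    public static void main(String[] args) {
        System.out.println(of("bartowski", "gemma-2-2b-it-GGUF"));
    }
}
```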

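Sample Controller

Create a new Spring Boot project and add spring-ai-starter-model-ollama to your pom (or gradle) dependencies.

Add an application.yaml file under the src/main/resources directory to enable and configure the Ollama chat model:

    spring:
      ai:
        ollama:
          base-url: http://localhost:11434
          chat:
            options:
              model: mistral
              temperature: 0.7

Replace the …

This creates an OllamaChatModel implementation that you can inject into your classes.

Here is an example of a simple @RestController class that uses the chat model for text generation.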

    @RestController
    public class ChatController {

        private final OllamaChatModel chatModel;

        @Autowired
        public ChatController(OllamaChatModel chatModel) {
            this.chatModel = chatModel;
        }

        @GetMapping("/ai/generate")
        public Map<String,String> generate(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) {
            return Map.of("generation", this.chatModel.call(message));
        }

        @GetMapping("/ai/generateStream")
        public Flux<ChatResponse> generateStream(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) {
            Prompt prompt = new Prompt(new UserMessage(message));
            return this.chatModel.stream(prompt);
        }

    }

Manual Configuration

If you don't want to use the Spring Boot auto-configuration, you can manually configure the OllamaChatModel in your application.

The OllamaChatModel implements ChatModel and StreamingChatModel and uses the Low-level OllamaApi Client to connect to the Ollama service.

To use it, add the spring-ai-ollama dependency to your project's Maven pom.xml or Gradle build.gradle build file:

Maven

    <dependency>
        <groupId>org.springframework.ai</groupId>
        <artifactId>spring-ai-ollama</artifactId>
    </dependency>

Gradle

    dependencies {
        implementation 'org.springframework.ai:spring-ai-ollama'
    }

Refer to the Dependency Management section to add the Spring AI BOM to your build file.


Next, create an OllamaChatModel instance and use it to send requests for text generation:

    var ollamaApi = OllamaApi.builder().build();

    var chatModel = OllamaChatModel.builder()
            .ollamaApi(ollamaApi)
            .defaultOptions(
                OllamaOptions.builder()
                    .model(OllamaModel.MISTRAL)
                    .temperature(0.9)
                    .build())
            .build();

    ChatResponse response = chatModel.call(
        new Prompt("Generate the names of 5 famous pirates."));

    // Or with streaming responses
    Flux<ChatResponse> streamingResponse = chatModel.stream(
        new Prompt("Generate the names of 5 famous pirates."));

The OllamaOptions provides the configuration information for all chat requests.

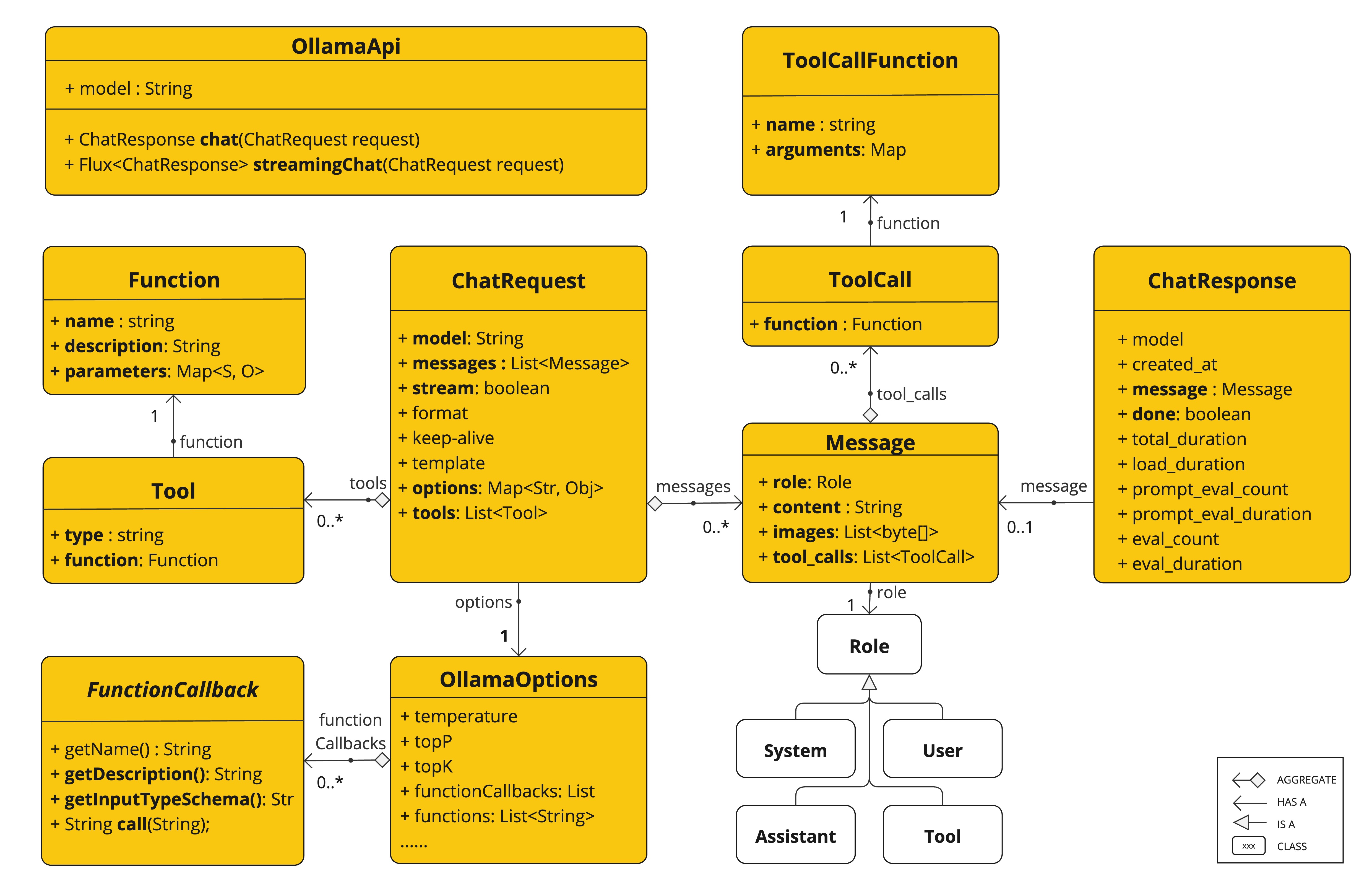

Low-level OllamaApi Client

The OllamaApi provides a lightweight Java client for the Ollama Chat Completion API.

The following class diagram illustrates the OllamaApi chat interfaces and building blocks:


Here is a simple snippet showing how to use the API programmatically:

    OllamaApi ollamaApi = new OllamaApi("YOUR_HOST:YOUR_PORT");

    // Sync request
    var request = ChatRequest.builder("orca-mini")
        .stream(false) // not streaming
        .messages(List.of(
            Message.builder(Role.SYSTEM)
                .content("You are a geography teacher. You are talking to a student.")
                .build(),
            Message.builder(Role.USER)
                .content("What is the capital of Bulgaria and what is the size? "
                    + "What is the national anthem?")
                .build()))
        .options(OllamaOptions.builder().temperature(0.9).build())
        .build();

    ChatResponse response = ollamaApi.chat(request);

    // Streaming request
    var request2 = ChatRequest.builder("orca-mini")
        .stream(true) // streaming
        .messages(List.of(Message.builder(Role.USER)
            .content("What is the capital of Bulgaria and what is the size? " + "What is the national anthem?")
            .build()))
        .options(OllamaOptions.builder().temperature(0.9).build().toMap())
        .build();

    Flux<ChatResponse> streamingResponse = ollamaApi.streamingChat(request2);