英伟达聊天

NVIDIA LLM API 是一个代理 AI 推理引擎,提供来自 各种提供商 的广泛模型。

Spring AI 通过重用现有的 OpenAI 客户端与 NVIDIA LLM API 集成。

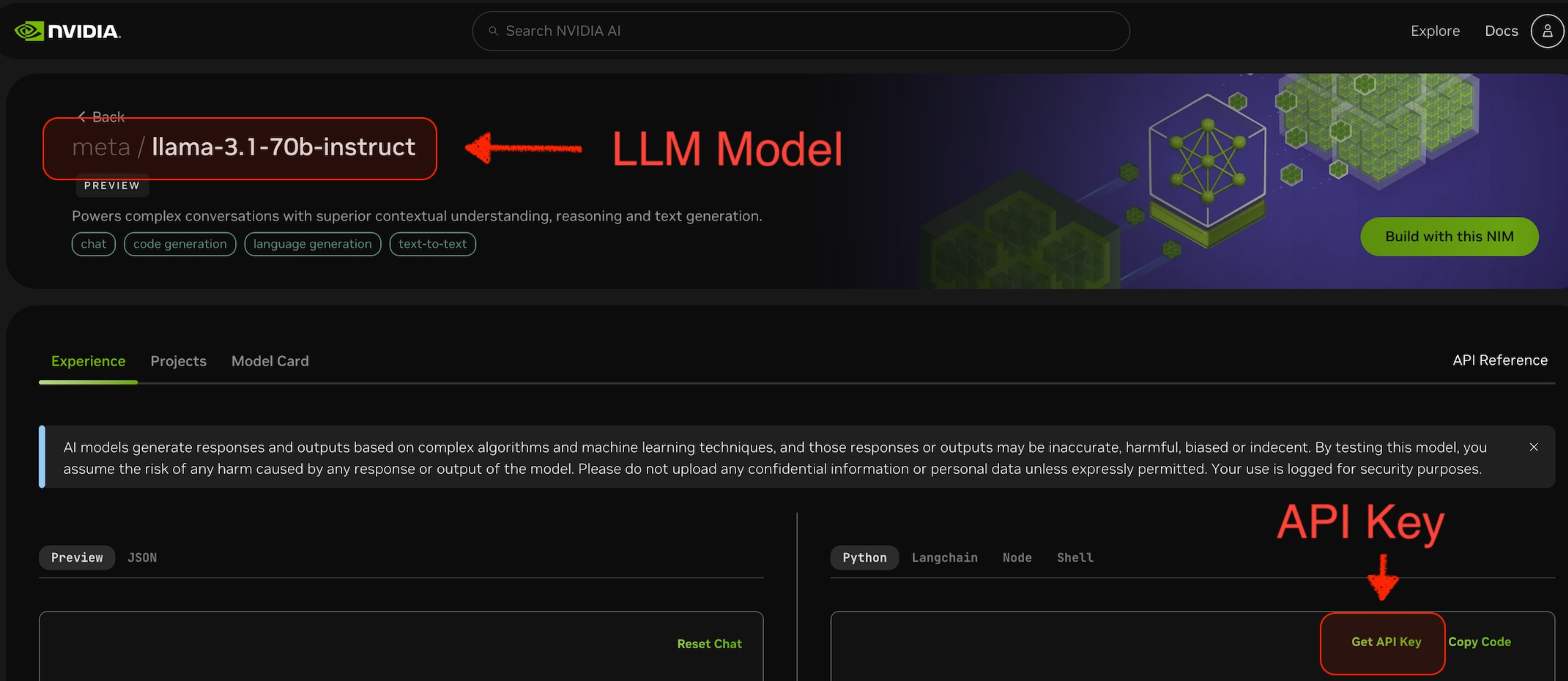

为此,您需要将 base-url 设置为 https://integrate.api.nvidia.com,选择一个提供的 LLM 模型,并为其获取 api-key。

image::spring-ai-nvidia-llm-api-1.jpg[]

|

NVIDIA LLM API 要求明确设置 |

查看 NvidiaWithOpenAiChatModelIT.java 测试 以获取使用 Spring AI 调用 NVIDIA LLM API 的示例。

先决条件

-

创建具有足够信用额度的 NVIDIA 账户。

-

选择一个要使用的 LLM 模型。例如,下图中的

meta/llama-3.1-70b-instruct。 -

从所选模型的页面,您可以获取用于访问该模型的

api-key。

自动配置

|

Spring AI 自动配置、启动器模块的 artifact 名称发生了重大变化。 请参阅 升级说明 以获取更多信息。 |

Spring AI 为 OpenAI 聊天客户端提供 Spring Boot 自动配置。

要启用它,请将以下依赖项添加到您的项目的 Maven pom.xml 文件中:

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-starter-model-openai</artifactId>

</dependency>或添加到您的 Gradle build.gradle 构建文件中。

dependencies {

implementation 'org.springframework.ai:spring-ai-starter-model-openai'

}|

请参阅 依赖管理 部分,将 Spring AI BOM 添加到您的构建文件中。 |

聊天属性

重试属性

spring.ai.retry 前缀用作属性前缀,允许您配置 OpenAI 聊天模型的重试机制。

| 属性 | 描述 | 默认值 |

|---|---|---|

spring.ai.retry.max-attempts |

最大重试次数。 |

10 |

spring.ai.retry.backoff.initial-interval |

指数退避策略的初始休眠持续时间。 |

2 秒。 |

spring.ai.retry.backoff.multiplier |

退避间隔乘数。 |

5 |

spring.ai.retry.backoff.max-interval |

最大退避持续时间。 |

3 分钟。 |

spring.ai.retry.on-client-errors |

如果为 false,则抛出 NonTransientAiException,并且不尝试重试 |

false |

spring.ai.retry.exclude-on-http-codes |

不应触发重试(例如抛出 NonTransientAiException)的 HTTP 状态码列表。 |

空 |

spring.ai.retry.on-http-codes |

应触发重试(例如抛出 TransientAiException)的 HTTP 状态码列表。 |

空 |

连接属性

spring.ai.openai 前缀用作属性前缀,允许您连接到 OpenAI。

| 属性 | 描述 | 默认值 |

|---|---|---|

spring.ai.openai.base-url |

要连接的 URL。必须设置为 |

- |

spring.ai.openai.api-key |

NVIDIA API 密钥 |

- |

配置属性

|

聊天自动配置的启用和禁用现在通过前缀为 |

spring.ai.openai.chat 前缀是属性前缀,允许您配置 OpenAI 的聊天模型实现。

| 属性 | 描述 | 默认值 |

|---|---|---|

spring.ai.openai.chat.enabled(已移除且不再有效) |

启用 OpenAI 聊天模型。 |

true |

spring.ai.model.chat |

启用 OpenAI 聊天模型。 |

openai |

spring.ai.openai.chat.base-url |

可选覆盖 spring.ai.openai.base-url 以提供聊天专用 URL。必须设置为 |

- |

spring.ai.openai.chat.api-key |

可选覆盖 spring.ai.openai.api-key 以提供聊天专用 api-key |

- |

spring.ai.openai.chat.options.model |

要使用的 NVIDIA LLM 模型 |

- |

spring.ai.openai.chat.options.temperature |

用于控制生成补全的感知创造性的采样温度。值越高,输出越随机,而值越低,结果越集中和确定。不建议在同一补全请求中修改 temperature 和 top_p,因为这两个设置的交互难以预测。 |

0.8 |

spring.ai.openai.chat.options.frequencyPenalty |

介于 -2.0 和 2.0 之间的数字。正值会根据新令牌在文本中出现的现有频率对其进行惩罚,从而降低模型逐字重复同一行的可能性。 |

0.0f |

spring.ai.openai.chat.options.maxTokens |

在聊天补全中生成的最大令牌数。输入令牌和生成令牌的总长度受模型的上下文长度限制。 |

注意:NVIDIA LLM API 要求明确设置 |

spring.ai.openai.chat.options.n |

为每个输入消息生成多少个聊天补全选项。请注意,您将根据所有选项中生成的令牌数量收费。将 n 保持为 1 以最小化成本。 |

1 |

spring.ai.openai.chat.options.presencePenalty |

介于 -2.0 和 2.0 之间的数字。正值会根据新令牌是否出现在文本中对其进行惩罚,从而增加模型谈论新主题的可能性。 |

- |

spring.ai.openai.chat.options.responseFormat |

指定模型必须输出的格式的对象。设置为 |

- |

spring.ai.openai.chat.options.seed |

此功能处于 Beta 阶段。如果指定,我们的系统将尽力确定性地采样,以便具有相同种子和参数的重复请求应返回相同的结果。 |

- |

spring.ai.openai.chat.options.stop |

最多 4 个序列,API 将停止生成进一步的令牌。 |

- |

spring.ai.openai.chat.options.topP |

采样温度的替代方案,称为核采样,模型考虑具有 top_p 概率质量的令牌结果。因此 0.1 意味着只考虑构成前 10% 概率质量的令牌。我们通常建议更改此项或温度,但不要同时更改两者。 |

- |

spring.ai.openai.chat.options.tools |

模型可以调用的一系列工具。目前,仅支持函数作为工具。使用此项提供模型可以生成 JSON 输入的函数列表。 |

- |

spring.ai.openai.chat.options.toolChoice |

控制模型调用哪个(如果有)函数。 none 意味着模型不会调用函数,而是生成一条消息。 auto 意味着模型可以在生成消息或调用函数之间进行选择。通过 |

- |

spring.ai.openai.chat.options.user |

代表您的最终用户的唯一标识符,可以帮助 OpenAI 监控和检测滥用。 |

- |

spring.ai.openai.chat.options.functions |

函数列表,通过其名称标识,用于在单个提示请求中启用函数调用。具有这些名称的函数必须存在于 functionCallbacks 注册表中。 |

- |

spring.ai.openai.chat.options.stream-usage |

(仅用于流式传输)设置为添加一个额外的块,其中包含整个请求的令牌使用统计信息。此块的 |

false |

spring.ai.openai.chat.options.proxy-tool-calls |

如果为 true,Spring AI 将不会在内部处理函数调用,而是将它们代理到客户端。然后,客户端负责处理函数调用,将它们分派到适当的函数,并返回结果。如果为 false(默认值),Spring AI 将在内部处理函数调用。仅适用于支持函数调用的聊天模型 |

false |

|

所有带有 |

运行时选项

OpenAiChatOptions.java 提供模型配置,例如要使用的模型、温度、频率惩罚等。

在启动时,可以使用 OpenAiChatModel(api, options) 构造函数或 spring.ai.openai.chat.options.* 属性配置默认选项。

在运行时,您可以通过向 Prompt 调用添加新的、请求特定的选项来覆盖默认选项。

例如,为特定请求覆盖默认模型和温度:

ChatResponse response = chatModel.call(

new Prompt(

"Generate the names of 5 famous pirates.",

OpenAiChatOptions.builder()

.model("mixtral-8x7b-32768")

.temperature(0.4)

.build()

));|

除了模型特定的 OpenAiChatOptions 之外,您还可以使用通过 ChatOptions#builder() 创建的便携式 ChatOptions 实例。 |

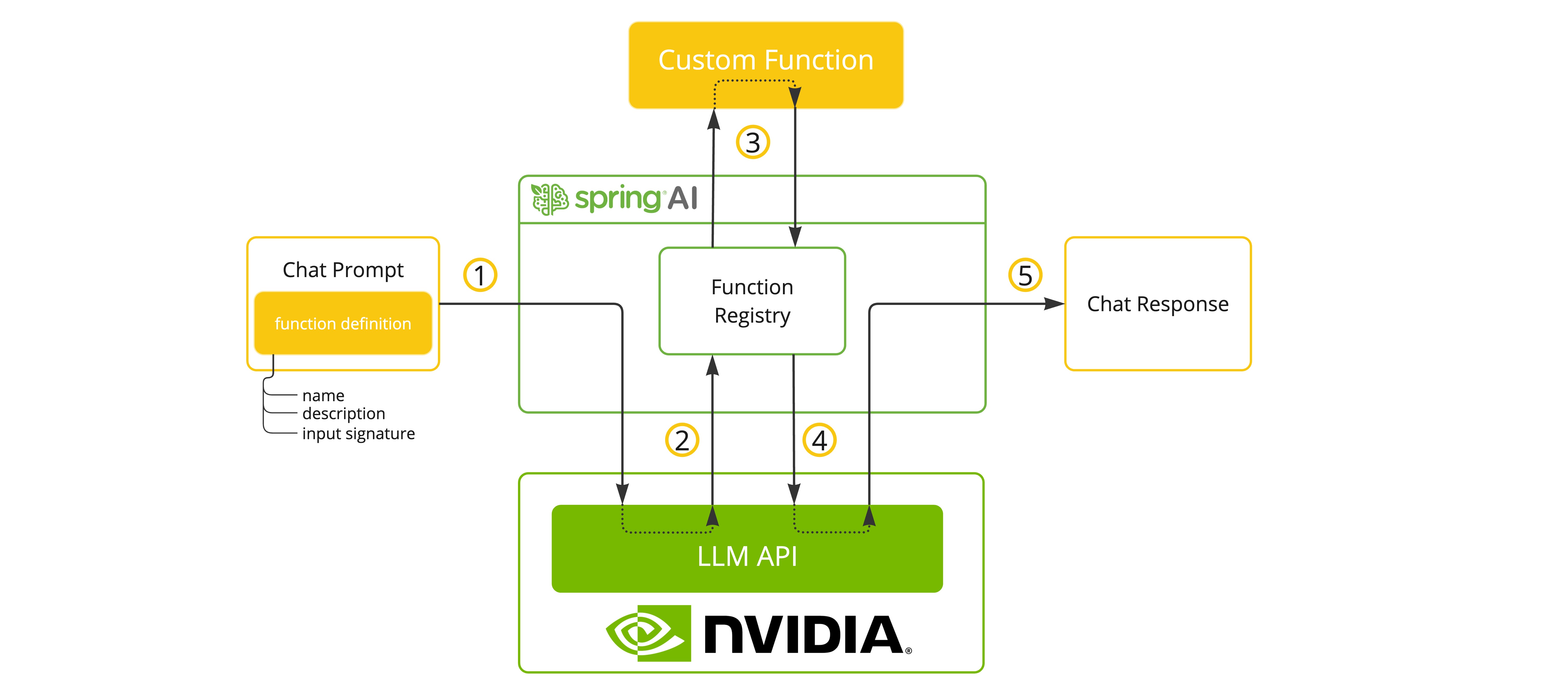

函数调用

NVIDIA LLM API 在选择支持工具/函数调用的模型时支持此功能。

您可以向 ChatModel 注册自定义 Java 函数,并让提供的模型智能地选择输出一个 JSON 对象,其中包含调用一个或多个已注册函数的参数。 这是一种将 LLM 功能与外部工具和 API 连接起来的强大技术。

工具示例

以下是使用 Spring AI 调用 NVIDIA LLM API 函数的简单示例:

spring.ai.openai.api-key=${NVIDIA_API_KEY}

spring.ai.openai.base-url=https://integrate.api.nvidia.com

spring.ai.openai.chat.options.model=meta/llama-3.1-70b-instruct

spring.ai.openai.chat.options.max-tokens=2048@SpringBootApplication

public class NvidiaLlmApplication {

public static void main(String[] args) {

SpringApplication.run(NvidiaLlmApplication.class, args);

}

@Bean

CommandLineRunner runner(ChatClient.Builder chatClientBuilder) {

return args -> {

var chatClient = chatClientBuilder.build();

var response = chatClient.prompt()

.user("What is the weather in Amsterdam and Paris?")

.functions("weatherFunction") // reference by bean name.

.call()

.content();

System.out.println(response);

};

}

@Bean

@Description("Get the weather in location")

public Function<WeatherRequest, WeatherResponse> weatherFunction() {

return new MockWeatherService();

}

public static class MockWeatherService implements Function<WeatherRequest, WeatherResponse> {

public record WeatherRequest(String location, String unit) {}

public record WeatherResponse(double temp, String unit) {}

@Override

public WeatherResponse apply(WeatherRequest request) {

double temperature = request.location().contains("Amsterdam") ? 20 : 25;

return new WeatherResponse(temperature, request.unit);

}

}

}在此示例中,当模型需要天气信息时,它将自动调用 weatherFunction bean,然后该 bean 可以获取实时天气数据。

预期的响应如下所示:“阿姆斯特丹目前的气温为 20 摄氏度,巴黎目前的气温为 25 摄氏度。”

阅读有关 OpenAI 函数调用 的更多信息。

示例控制器

创建 一个新的 Spring Boot 项目,并将 spring-ai-starter-model-openai 添加到您的 pom(或 gradle)依赖项中。

在 src/main/resources 目录下添加 application.properties 文件,以启用和配置 OpenAi 聊天模型:

spring.ai.openai.api-key=${NVIDIA_API_KEY}

spring.ai.openai.base-url=https://integrate.api.nvidia.com

spring.ai.openai.chat.options.model=meta/llama-3.1-70b-instruct

# The NVIDIA LLM API doesn't support embeddings, so we need to disable it.

spring.ai.openai.embedding.enabled=false

# The NVIDIA LLM API requires this parameter to be set explicitly or server internal error will be thrown.

spring.ai.openai.chat.options.max-tokens=2048|

将 |

|

NVIDIA LLM API 要求明确设置 |

以下是一个使用聊天模型进行文本生成的简单 @Controller 类的示例。

@RestController

public class ChatController {

private final OpenAiChatModel chatModel;

@Autowired

public ChatController(OpenAiChatModel chatModel) {

this.chatModel = chatModel;

}

@GetMapping("/ai/generate")

public Map generate(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) {

return Map.of("generation", this.chatModel.call(message));

}

@GetMapping("/ai/generateStream")

public Flux<ChatResponse> generateStream(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) {

Prompt prompt = new Prompt(new UserMessage(message));

return this.chatModel.stream(prompt);

}

}